The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

Traders and portfolio managers over-rely on algorithmic trading signals and automated risk scoring, executing positions recommended by models without cross-checking against fundamental analysis or current market context. This can amplify losses during unprecedented market conditions the model wasn't trained on.

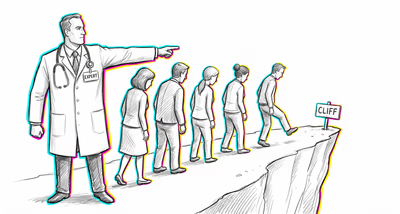

Medicine & diagnosis

Clinicians accept clinical decision support system diagnoses or drug interaction alerts without independent verification. This leads to commission errors when the system gives incorrect recommendations and omission errors when it fails to flag genuine risks. The problem is particularly dangerous with electronic health records, where prior data-entry errors get perpetuated as 'authoritative.'

Education & grading

Teachers and administrators defer to automated grading systems, plagiarism detectors, or learning analytics dashboards without critically evaluating edge cases, resulting in false plagiarism accusations or misidentification of students' learning needs when the system's pattern-matching fails on atypical student work.

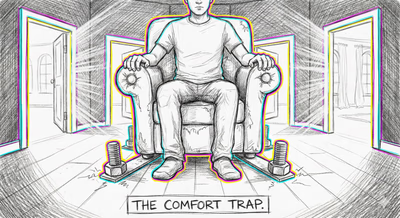

Relationships

People defer to dating app compatibility algorithms or relationship-assessment tools over their own intuitive sense of connection, dismissing genuine chemistry with someone who scores poorly on a matching algorithm or persisting with a poor match because the app said they were '95% compatible.'

Tech & product

Engineers and product teams accept automated test suite results, CI/CD pipeline approvals, and monitoring dashboards as definitive proof of system health, skipping manual code review or exploratory testing, which allows subtle bugs and regressions to ship when the automated checks have blind spots.

Workplace & hiring

Managers rely on automated performance metrics, employee monitoring software, and AI-generated performance reviews without seeking qualitative input. Employees whose work doesn't map neatly to tracked metrics get mischaracterized, and initial scoring biases are perpetuated across review cycles.

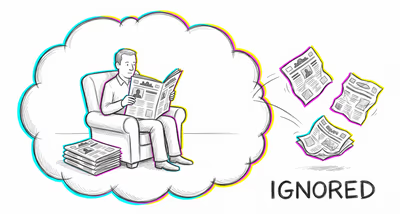

Politics & media

News consumers and journalists accept algorithmically curated trending topics, automated fact-check labels, and AI-generated summaries as accurate representations of events without consulting primary sources, which allows algorithmic biases in content ranking to shape public perception of issue importance and credibility.