The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

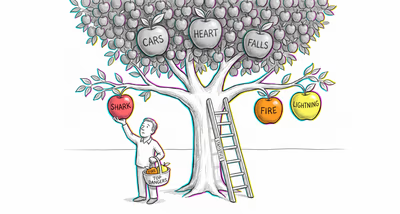

Investors and institutions underestimate the probability of market crashes, asset bubbles bursting, or systemic failures because extended periods of stability make catastrophic scenarios feel implausible. This leads to underhedging, overleveraging, and delayed responses to deteriorating economic indicators.

Medicine & diagnosis

Patients delay seeking medical attention for worsening symptoms because they have historically been healthy, interpreting alarming signs as benign. Clinicians may also underestimate the severity of emerging epidemics or rare diagnoses because their daily experience is dominated by routine cases.

Education & schools

School administrators underprepare for crises such as active shooter events, severe weather, or pandemics because such events have never occurred at their institution. Fire drills and lockdown procedures are treated as bureaucratic formalities rather than rehearsals for genuinely possible emergencies.

Relationships

People remain in deteriorating or abusive relationships far longer than warranted because they normalize escalating warning signs, assuming the relationship will return to how it was during better times. Red flags are reframed as temporary rough patches.

Tech & product

Development teams underinvest in disaster recovery, backup systems, and security hardening because their infrastructure has never experienced a catastrophic failure. Legacy systems remain unpatched because breaches have never occurred, creating mounting technical debt.

Workplace & hiring

Organizations fail to create contingency plans for key-person departures, supply chain disruptions, or regulatory shifts because operations have been stable. Safety protocols in industrial settings become lax when no accidents have occurred for extended periods.

Politics & media

Governments and the public underreact to intelligence warnings about terrorist threats, emerging pandemics, or geopolitical escalation because such events seem remote from daily experience. Media coverage of early warning signs stays minimal until the crisis is already unfolding.