The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

Analysts who expect a company to outperform tend to interpret ambiguous earnings data more favorably, ask more optimistic questions during earnings calls, and write reports that selectively highlight confirming metrics. Those reports can then influence investor behavior in ways that temporarily prop up the stock price, seemingly validating the original forecast.

Medicine & diagnosis

Physicians who suspect a particular diagnosis may unconsciously conduct more thorough examinations of relevant symptoms while overlooking contradictory signs, or ask leading questions that steer patients toward confirming the expected condition. In drug trials, unblinded investigators may rate subjective outcomes more favorably for the treatment group through differential warmth or attention.

Education & grading

Teachers who are told certain students are 'gifted' or 'at-risk' may unconsciously adjust their teaching behavior: they offer more wait time, richer feedback, and greater encouragement to expected high-performers, while giving less challenging material and fewer opportunities to students expected to struggle. The achievement gaps that widen as a result then appear to validate the original labels.

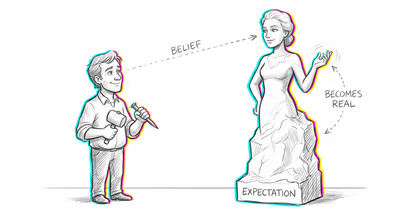

Relationships

When someone is told by mutual friends that a new acquaintance is 'really warm and fun,' they approach that person with more openness and enthusiasm, which elicits genuinely warmer and more engaging behavior from the other person, seemingly confirming the description.

Tech & product

Product teams that expect a new feature to improve engagement may unconsciously design A/B tests with subtle advantages for the treatment group — better onboarding flows, more prominent placement, or more polished visuals — then attribute the resulting improvement to the feature itself rather than the differential treatment.
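One way to guard against this is to check, before launch, that the two arms differ only in the feature being tested. The snippet below is a minimal sketch of that idea, assuming each arm is described by a flat configuration dictionary; the function names and the keys (`new_feature`, `onboarding`, `placement`) are hypothetical and only for illustration.

```python
def config_differences(control: dict, treatment: dict) -> set:
    """Return the keys whose values differ between the two arm configurations."""
    missing = object()  # sentinel so a key absent from one arm counts as a difference
    keys = control.keys() | treatment.keys()
    return {
        key
        for key in keys
        if control.get(key, missing) != treatment.get(key, missing)
    }


def is_fair_test(control: dict, treatment: dict, feature_key: str) -> bool:
    """True only if the feature under test is the sole difference between arms."""
    return config_differences(control, treatment) == {feature_key}


# Hypothetical arm configurations: the treatment arm also received a newer
# onboarding flow and a more prominent placement, not just the new feature.
control = {"new_feature": False, "onboarding": "v1", "placement": "sidebar"}
treatment = {"new_feature": True, "onboarding": "v2", "placement": "homepage"}

unintended = config_differences(control, treatment) - {"new_feature"}
```

Here `unintended` contains `onboarding` and `placement`, the extra advantages that would otherwise be credited to the feature. A check like this only catches differences that are written down in configuration; it does not replace randomized assignment or a review of how each arm was actually built.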

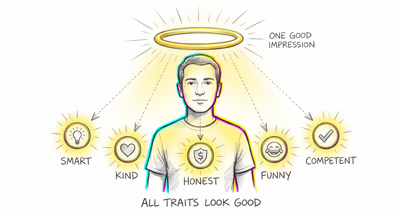

Workplace & hiring

Managers who have already formed an impression of an employee's potential may unconsciously assign more visible projects to those they expect to succeed, give them more developmental feedback, and advocate more strongly for them. The resulting performance differentials then appear to confirm the manager's original assessment during reviews.

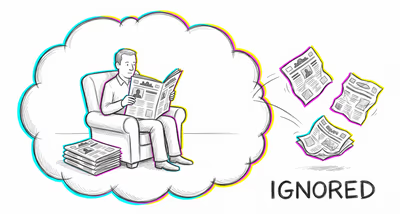

Politics & media

Pollsters and journalists who expect a certain electoral outcome may frame questions in ways that elicit confirming responses, selectively quote respondents who match their narrative, or give more airtime to evidence supporting their prediction — subtly shaping public opinion toward the expected result.