The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

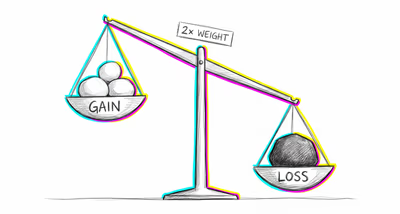

Investors hold onto declining assets rather than actively selling at a loss, because the realized loss from selling feels like a self-inflicted wound, while the paper loss from inaction feels like an external event happening to them. This contributes to the disposition effect and portfolio inertia.

Medicine & diagnosis

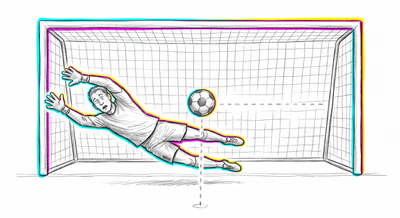

Physicians and patients systematically prefer watchful waiting over active treatment when both carry equivalent risk, because adverse outcomes from treatment are perceived as iatrogenic harm while adverse outcomes from non-treatment are perceived as the natural course of disease. This is particularly pronounced in vaccine hesitancy, where parents judge vaccine side effects as far worse than equivalent disease complications.

Education & grading

Teachers may avoid intervening when they notice a student being socially excluded, because actively restructuring group dynamics risks making things worse and being blamed for it, while passively allowing the exclusion to continue feels like a less culpable position.

Relationships

People avoid having difficult but necessary conversations — about boundaries, unmet needs, or dealbreakers — because actively raising the issue risks causing a fight they'd feel responsible for, while letting the relationship slowly deteriorate through inaction feels like something that 'just happened.'

Tech & product

Product teams resist removing underperforming features or sunsetting legacy products because actively discontinuing something users rely on feels more harmful than passively allowing technical debt and fragmented user experiences to accumulate. Default opt-in settings exploit the same bias in users, who rarely take the active step of opting out.

Workplace & hiring

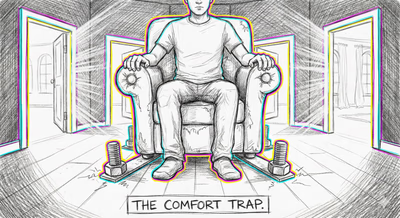

Managers delay firing poor performers, restructuring teams, or canceling failing projects because taking action that visibly harms someone feels worse than allowing the broader harm of inaction to continue invisibly. Performance review systems that require active ratings ('rate this person') face more resistance than those with default categories.

Politics & media

Legislators may vote against policies that would save more lives than they cost (e.g., mandatory safety regulations) because the visible victims of regulation feel like the legislator's fault, while the statistical victims of non-regulation are attributed to circumstance. Media coverage can reinforce this by extensively covering harms caused by government action while under-reporting harms caused by government inaction.