The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

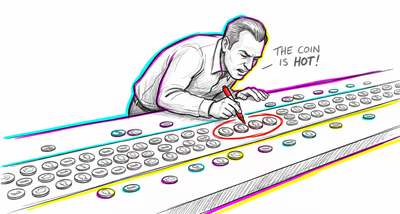

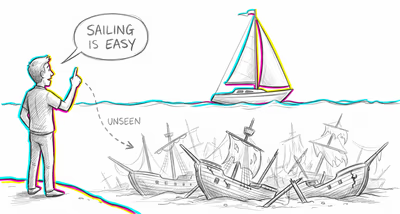

Investors and analysts frequently back-test trading strategies across decades of market data, then highlight the specific combination of indicators that would have yielded the highest returns — without accounting for the thousands of indicator combinations that failed. This leads to overfitted models that perform well historically but collapse on new data.
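A minimal sketch of that effect, assuming a made-up setup: 1,000 hypothetical "strategies" whose daily returns are pure noise with no real edge. The function name `simulate_backtest`, the strategy count, and the 1% daily volatility are illustrative assumptions, not figures from any real back-test.

```python
import random
import statistics

def simulate_backtest(n_strategies=1000, n_days=250, seed=0):
    """Pick the best of many zero-edge strategies in-sample, then re-test it."""
    rng = random.Random(seed)
    # Every hypothetical strategy is noise: daily returns drawn with mean 0.
    in_sample_means = [
        statistics.fmean(rng.gauss(0, 0.01) for _ in range(n_days))
        for _ in range(n_strategies)
    ]
    # "Highlight" the winner after the fact, as a back-tester might.
    best_in_sample = max(in_sample_means)
    # The winner has no real edge, so its future returns are fresh noise.
    out_of_sample = statistics.fmean(rng.gauss(0, 0.01) for _ in range(n_days))
    return best_in_sample, out_of_sample

best_in, best_out = simulate_backtest()
print(f"winner, in-sample mean daily return:     {best_in:.5f}")
print(f"winner, out-of-sample mean daily return: {best_out:.5f}")
```

The in-sample winner looks impressive only because it was selected from many tries; on new data its average return should fall back toward zero.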

Medicine & diagnosis

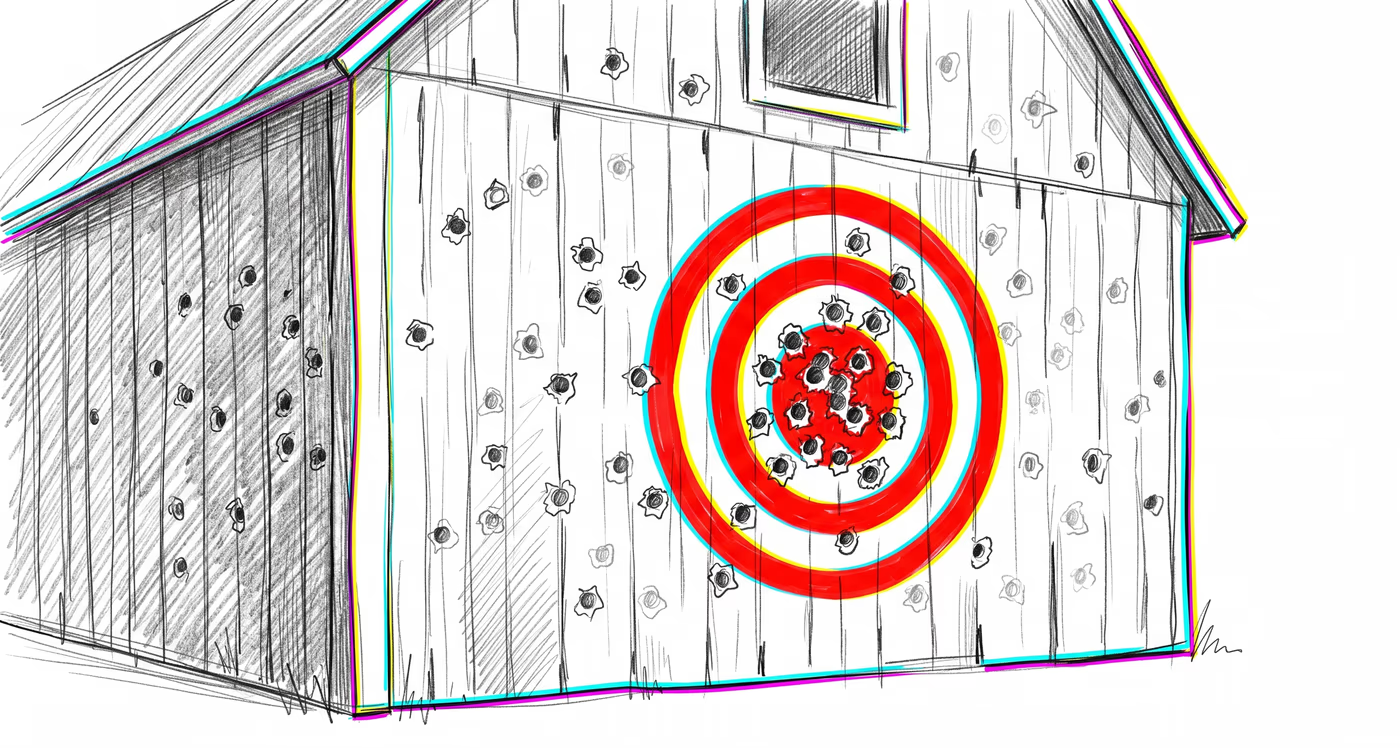

Epidemiologists face constant pressure from communities reporting apparent disease clusters. When boundaries are drawn around observed cases after the fact rather than defined in advance, nearly any region can appear to have an abnormally high rate of illness, leading to false alarms, wasted resources, and public fear about non-existent environmental hazards.
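To illustrate why after-the-fact boundaries mislead, here is a rough sketch that scatters cases uniformly at random over a grid of equal-sized districts, so no district carries any real excess risk. The grid size, case count, and the name `cluster_demo` are assumptions chosen for illustration only.

```python
import random

def cluster_demo(n_cases=200, grid=10, seed=1):
    """Compare a district chosen in advance with the worst one found afterwards."""
    rng = random.Random(seed)
    counts = [[0] * grid for _ in range(grid)]
    # Drop each case into a random district: there is no true hotspot.
    for _ in range(n_cases):
        counts[rng.randrange(grid)][rng.randrange(grid)] += 1
    expected = n_cases / (grid * grid)            # average cases per district
    predefined = counts[0][0]                     # boundary fixed before looking
    post_hoc = max(max(row) for row in counts)    # boundary drawn around the peak
    return expected, predefined, post_hoc

expected, predefined, post_hoc = cluster_demo()
print(f"expected per district: {expected:.1f}")
print(f"district chosen in advance: {predefined}")
print(f"worst district chosen after the fact: {post_hoc}")
```

Even with purely random placement, the district picked after looking at the data will typically show a count well above the average, which is the pattern a post hoc "cluster" reflects.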

Education & grading

Administrators may examine student performance data across many variables — teacher, classroom, time of day, curriculum version — and highlight whichever combination shows the best results as a 'proven approach,' ignoring the many other combinations that showed no effect and failing to validate the finding with new data.

Relationships

People sometimes review a partner's past behavior after discovering infidelity, selectively stringing together small moments that 'now make sense' as evidence of a pattern of deception, while ignoring the overwhelming number of interactions that were genuinely honest and loving.

Tech & product

Product teams running multivariate A/B tests across many user segments, features, and time windows will almost inevitably find some combination that shows a statistically significant result by chance alone. Treating that finding as a validated insight without replication leads to feature changes that don't actually improve the product.
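The arithmetic behind this can be sketched as follows, under the simplifying assumption that the comparisons are independent and each uses the same significance level. The function names are illustrative, and the Bonferroni correction shown is one common, conservative adjustment rather than the only option.

```python
def familywise_error_rate(n_tests, alpha=0.05):
    """Chance of at least one false positive across independent tests."""
    return 1 - (1 - alpha) ** n_tests

def bonferroni_threshold(n_tests, alpha=0.05):
    """Per-test significance level that keeps the overall rate near alpha."""
    return alpha / n_tests

for n in (1, 20, 100):
    print(
        f"{n:>3} tests: P(at least one false positive) = "
        f"{familywise_error_rate(n):.2f}, "
        f"Bonferroni per-test threshold = {bonferroni_threshold(n):.4f}"
    )
```

With 20 independent comparisons at the usual 0.05 level, the chance of at least one spurious "win" is roughly 64%, which is why a single significant segment needs replication before it is treated as real.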

Workplace & hiring

HR departments may identify that several top performers share a particular trait — such as attending the same university or having a hobby in common — and build hiring criteria around it, ignoring the many employees with the same trait who performed poorly and the top performers who lack it entirely.

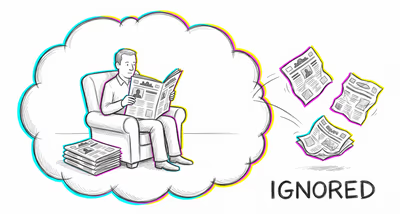

Politics & media

Pundits cherry-pick economic indicators, crime statistics, or polling data from specific time windows or regions that support their preferred political narrative, while ignoring the broader dataset that tells a more ambiguous or contradictory story.