The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

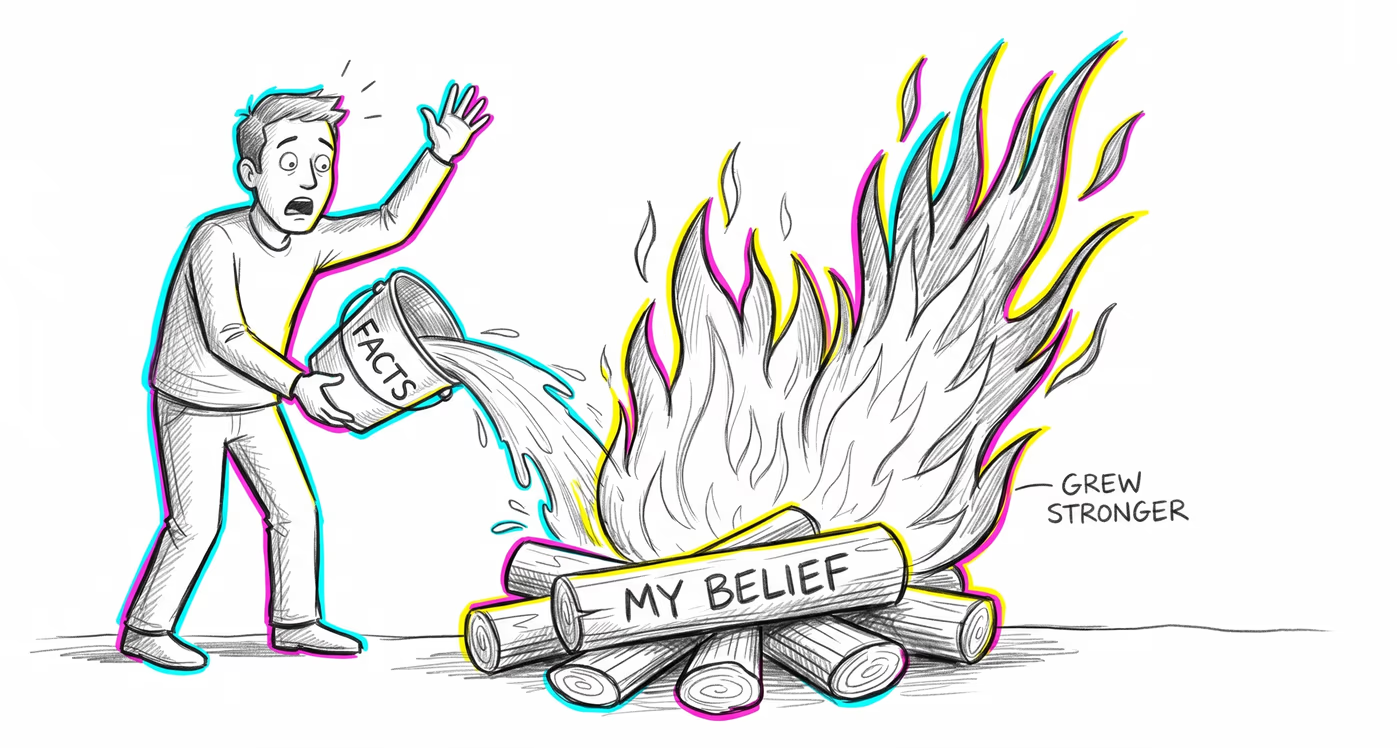

Investors who have publicly committed to a market thesis may react to disconfirming earnings reports or analyst downgrades by increasing their position size rather than reducing it, interpreting negative signals as temporary setbacks that validate their long-term conviction.

Medicine & diagnosis

Patients presented with clinical evidence contradicting their health beliefs — such as studies showing a favored supplement is ineffective — may dismiss the evidence as biased and become more committed to the treatment, sometimes reducing trust in their healthcare provider.

Education & grading

Students who hold strong misconceptions about scientific topics may emerge from corrective lessons with those misconceptions reinforced rather than resolved, particularly when the correction directly confronts the error without providing an alternative explanatory framework.

Relationships

When a friend or partner presents evidence that a person's romantic interest is not reciprocated, such as pointing out clear signs of a lack of interest, the person may reinterpret the evidence as proof that the other party is 'playing hard to get' and pursue even more intensely.

Tech & product

When A/B test results show that a product team's favored design underperforms, team members who championed the design may question the methodology, sample size, or testing conditions rather than accept the data, and push to run the test again with modified parameters.

Workplace & hiring

Employees who receive critical performance feedback that contradicts their self-assessment may become more entrenched in their work habits and more vocal about their competence, viewing the feedback as evidence of the evaluator's misunderstanding rather than a signal to change.

Politics & media

Fact-checks of politically charged claims can cause partisans to increase their support for the corrected politician, viewing the fact-check itself as evidence of media bias or a coordinated attack, thereby deepening their original commitment.