The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

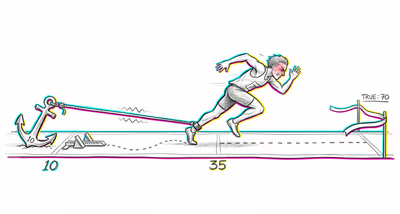

Investors systematically under-react to corporate events such as earnings announcements, dividend changes, and stock splits. When a company reports earnings substantially above or below expectations, the stock price adjusts, but typically not enough, and then keeps moving in the same direction over the following weeks. This pattern is known as post-earnings-announcement drift. A common explanation is that investors stay anchored to prior estimates and fail to fully incorporate the magnitude of the new information into their valuation models.
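To make the idea of drift concrete, here is a toy sketch in Python. It is an illustration only, not a model taken from the research: it assumes the price closes a fixed fraction of the remaining gap to the new fair value each day. The function name `price_path` and the `reaction` parameter are hypothetical, and the numbers are made up.

```python
def price_path(old_value, new_value, reaction, days):
    """Toy partial-adjustment path (illustrative assumption, not real data).

    Each day the price closes `reaction` (0..1) of the remaining gap
    between the current price and the new fair value.
    """
    prices = [old_value]
    for _ in range(days):
        gap = new_value - prices[-1]
        prices.append(prices[-1] + reaction * gap)
    return prices


# Full reaction: the whole surprise is priced in on day one, so no drift.
full = price_path(100.0, 110.0, reaction=1.0, days=3)

# Under-reaction: only half of the remaining gap closes each day,
# so the price keeps drifting toward 110 after the announcement.
slow = price_path(100.0, 110.0, reaction=0.5, days=3)

print(full)  # [100.0, 110.0, 110.0, 110.0]
print(slow)  # [100.0, 105.0, 107.5, 108.75]
```

With `reaction=1.0` the price jumps straight to the new value. With `reaction=0.5` it is still short of 110 three days later, which is the shape drift takes under this simplified assumption.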

Medicine & diagnosis

Clinicians may cling to an initial diagnosis even as new test results and symptoms accumulate that point toward a different condition. The initial diagnostic impression becomes a cognitive anchor, and subsequent evidence is under-weighted, leading to delayed treatment adjustments. This is particularly dangerous in conditions with evolving presentations, such as cancers initially misidentified as benign conditions.

Education & grading

Teachers form early impressions of student ability based on initial assignments or classroom behavior. When students subsequently improve or decline in performance, teachers tend to adjust their expectations and grading patterns more slowly than the objective evidence warrants, perpetuating early assessments long after they have become inaccurate.

Relationships

People form initial impressions of a partner's character traits early in a relationship and then under-adjust those impressions when faced with contradicting evidence over time. A partner who was initially perceived as reliable may continue to receive the benefit of the doubt long after repeated unreliable behavior, because the prior belief about their character is slow to update.

Tech & product

Product teams anchor on initial user research or feature assumptions and fail to sufficiently pivot when A/B test results or usage analytics present strong contradicting signals. This leads to continued investment in features that data shows users are abandoning, because the team under-weights the new behavioral data relative to their original design thesis.

Workplace & hiring

Managers anchor on first impressions formed during onboarding or early performance reviews. When an employee's performance shifts dramatically, whether improving or deteriorating, the manager's formal evaluations and promotion decisions lag behind the actual trajectory and reflect the original assessment more than current reality.

Politics & media

Voters and media consumers form early impressions of political candidates or policies and then under-react to new information such as policy reversals, scandal revelations, or updated economic data. Polling shifts tend to be smaller than the magnitude of new events would predict, especially among partisans, who resist updating priors that conflict with their established political narratives.