The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

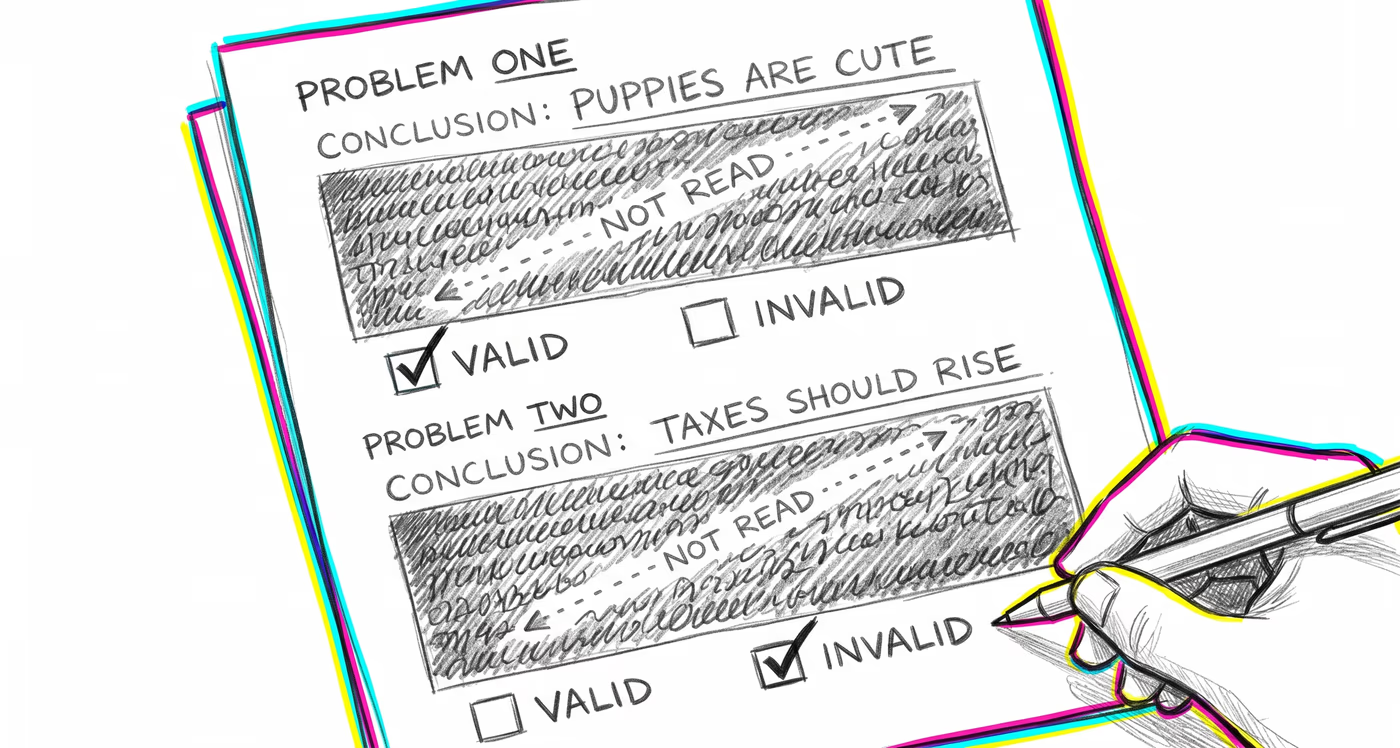

Investors accept bullish analyst reports with logically flawed reasoning when the conclusion matches their existing portfolio thesis, while dismissing well-structured bearish arguments because the predicted downturn feels implausible given recent market performance.

Medicine & diagnosis

Clinicians may accept a diagnostic reasoning chain that arrives at a familiar or expected diagnosis without verifying that each inferential step is sound, while being overly skeptical of logically valid reasoning that points to an unusual or rare condition.

Education & grading

Students evaluate the correctness of mathematical proofs or scientific arguments based on whether the conclusion matches their expectations rather than tracing the logical steps. Teachers may accept student essays with plausible-sounding conclusions without scrutinizing the quality of the supporting arguments.

Relationships

People accept logically weak justifications for a partner's behavior when the conclusion matches what they want to believe ('They must still love me because...'), while rejecting well-reasoned concerns from friends because the implied conclusion is emotionally unacceptable.

Tech & product

Engineers accept flawed architectural arguments for a technical approach when the proposed solution aligns with their preferred technology stack, while dismissing valid critiques of that approach because the suggested alternative feels unfamiliar or counterintuitive.

Workplace & hiring

During performance reviews, managers accept poorly reasoned justifications for promoting a favored employee because the conclusion feels right, while holding logically sound cases for promoting someone they personally like less to a much stricter standard.

Politics & media

Voters accept political arguments with structural fallacies when the candidate's conclusion aligns with their party's platform, and reject logically sound arguments from opposing candidates because the conclusions conflict with their worldview.