The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

Traders and investors frequently attribute intentionality to market movements, interpreting a stock price decline as 'the market punishing the company' or algorithmic trading patterns as deliberate manipulation, when these movements often reflect aggregate statistical dynamics without any coordinating agent.

Medicine & diagnosis

Patients commonly interpret bodily symptoms as their body 'fighting back' or 'sending a message,' and may credit diseases with purposeful decision-making ('the cancer is clever'). This can undermine treatment adherence when patients try to negotiate with or outsmart their illness instead of following evidence-based protocols.

Education & grading

Teachers may interpret a student's off-task behavior as deliberate defiance rather than the result of confusion, distraction, or executive function difficulties, leading to punitive responses when supportive scaffolding would be more effective.

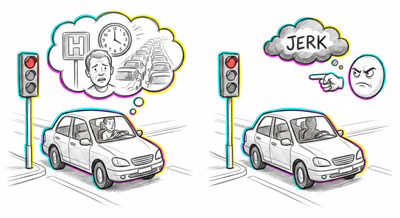

Relationships

Partners routinely over-attribute intentionality to each other's ambiguous behaviors, interpreting a forgotten anniversary as a deliberate signal of disinterest or reading hostile intent into a neutral facial expression. The result is escalating conflict over what began as a benign oversight or a neutral cue.

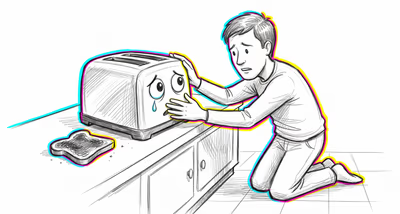

Tech & product

Users develop mental models of software as having goals and preferences, saying things like 'the app doesn't want me to find this setting.' Designers are not immune: during development they may anthropomorphize their own systems, attributing human-like motivations to algorithms and overlooking purely mechanical explanations for unexpected behavior.

Workplace & hiring

Employees commonly interpret organizational decisions—restructurings, policy changes, office moves—as personally targeted actions by management rather than systemic responses to business constraints, fueling resentment and conspiracy thinking within teams.

Politics & media

Voters and commentators read every misstep by political opponents as part of a coordinated strategy, interpreting gaffes, logistical errors, and bureaucratic inertia as deliberate schemes. This deepens partisan distrust and makes good-faith negotiation harder.