The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

Investors describe markets as 'nervous,' 'punishing,' or 'rewarding,' treating aggregate price movements as the deliberate decisions of a sentient entity rather than the emergent result of millions of individual transactions. That framing can lead to emotionally driven trading strategies.

Medicine & diagnosis

Patients attribute intentionality to diseases ('the cancer is fighting back') or to their own bodies ('my immune system is on my side'), which can influence treatment adherence—sometimes positively through agency, sometimes negatively through fatalism or misplaced trust in the body's 'wisdom.'

Education & grading

Students and teachers personify subjects ('math hates me') or learning tools, which can shape motivation and self-efficacy. Educators may also over-attribute understanding to AI tutoring systems, trusting them as if they genuinely comprehend student needs.

Relationships

Pet owners routinely attribute complex human emotions like jealousy, guilt, or vindictiveness to their animals, shaping how they discipline, reward, and emotionally bond with pets in ways that may not match the animal's actual cognitive and emotional capacities.

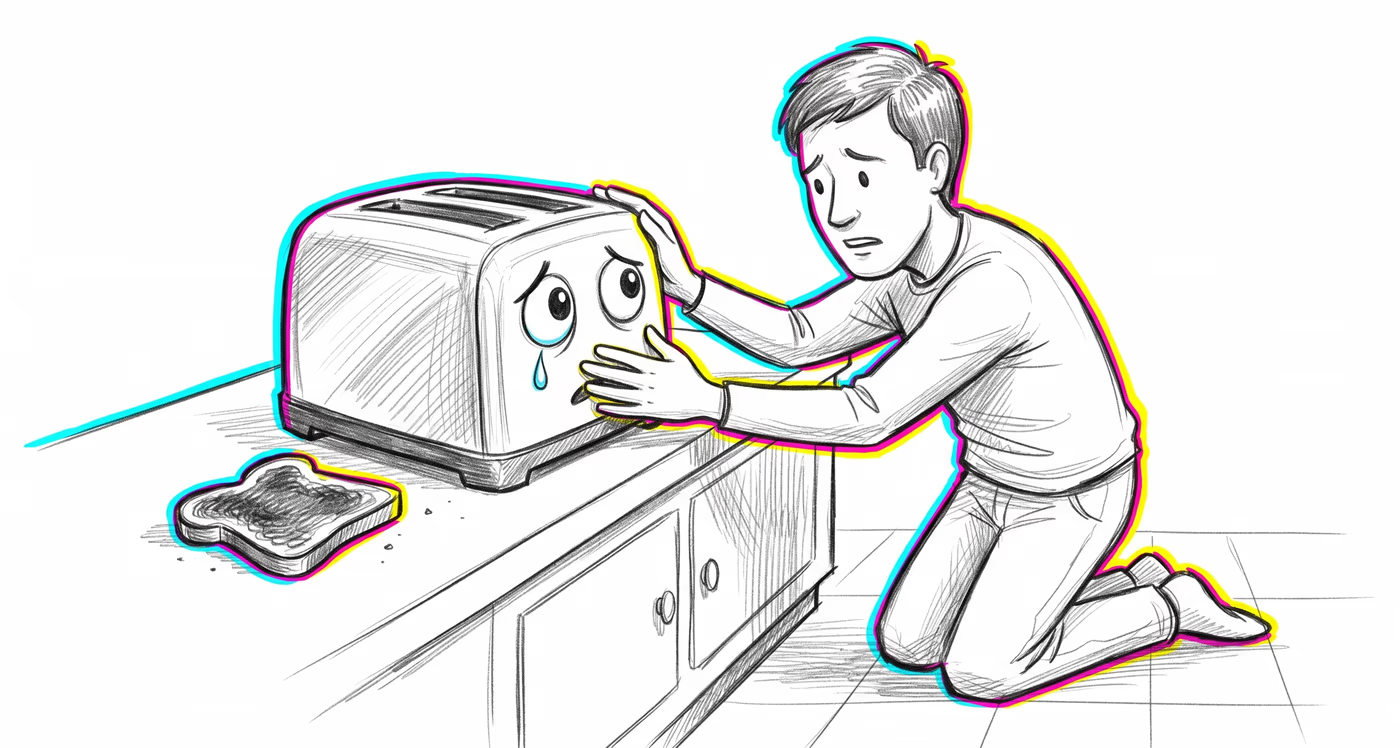

Tech & product

Designers deliberately exploit anthropomorphism by giving products faces, voices, and names (e.g., Siri, Alexa) to increase user trust and engagement. Users then over-rely on these systems, disclose sensitive information to chatbots, and resist switching products because of perceived 'relationships.'

Workplace & hiring

Teams personify organizational tools, processes, or even the organization itself ('the company doesn't care about us'), which can distort problem-solving by framing systemic issues as intentional hostility rather than structural failures requiring systemic fixes.

Politics & media

Nations and institutions are routinely described with human emotions and intentions ('Russia is angry,' 'the market is nervous'). This simplifies complex geopolitical or economic dynamics into narratives of interpersonal conflict and can bias public opinion toward overly personalized explanations of systemic phenomena.