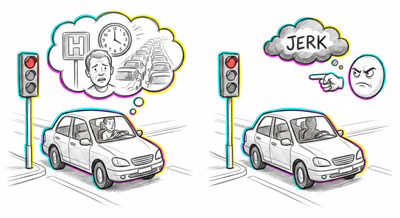

The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

Investors and fund managers are routinely evaluated based on portfolio returns rather than the soundness of their investment theses. A trader who made a highly leveraged, undiversified bet that happened to pay off is celebrated as skilled, while a disciplined risk manager whose diversified portfolio underperformed due to a black swan event is penalized — leading to systematic reward of reckless strategies and punishment of prudent ones.

Medicine & diagnosis

Physicians and surgeons are frequently judged by patient outcomes rather than by whether their clinical reasoning was appropriate given the available evidence. A surgeon who opts for a procedure with an 80% success rate is evaluated differently depending on whether the patient lives or dies, despite the decision being identical. This can lead to defensive medicine, where doctors avoid risky-but-appropriate interventions to protect their reputation.

Education & grading

Teachers and instructors are often evaluated by student test scores or graduation rates rather than the quality of their pedagogical methods. An unconventional teaching approach that happens to produce high scores is deemed effective, while a well-designed curriculum that yields average scores in a particularly challenging cohort is deemed failing — discouraging pedagogical innovation.

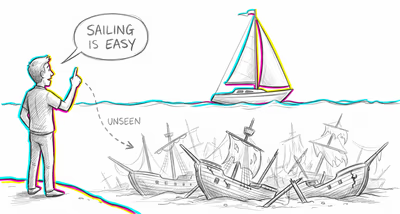

Relationships

People judge their partner's past decisions based on how things turned out rather than the reasoning at the time. A partner who encouraged a career change that didn't work out is blamed for 'bad advice,' while identical encouragement that preceded a windfall is remembered as wise support — creating an unfair scorecard in the relationship.

Tech & product

Product teams that ship features which happen to gain traction are celebrated as visionary, while teams whose well-researched features flop due to market timing or external factors are restructured. This leads organizations to mythologize luck as strategy and to over-index on copying past successes rather than building robust decision processes.

Workplace & hiring

Performance reviews heavily weight measurable outcomes like sales numbers or project completion over the quality of the employee's process, collaboration, and decision-making under uncertainty. Employees who benefited from favorable market conditions or inherited strong pipelines receive promotions, while those who managed difficult situations skillfully but with less visible results are overlooked.

Politics & media

Political leaders are judged almost entirely by outcomes that are often beyond their control. A president whose term coincides with economic growth driven by global trends is rated as competent, while one who governs during an inherited recession is deemed incompetent. The media reinforces this by constructing post-hoc narratives that treat outcomes as direct evidence of leadership quality.