The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

Users of AI-powered financial advisory chatbots may follow investment recommendations with less scrutiny because they've developed trust and rapport with the AI persona. They treat its outputs as advice from a trusted friend rather than as algorithmic output, which makes them more vulnerable to poorly calibrated suggestions.

Medicine & diagnosis

Patients using AI mental health chatbots may delay seeking human professional help because the chatbot's empathetic responses create a feeling of being 'in therapy,' even though the AI cannot detect clinical deterioration, adjust treatment plans, or provide genuine therapeutic presence.

Education & grading

Students who develop parasocial bonds with AI tutors may become dependent on the AI's constant validation and patient explanations, reducing their tolerance for the productive struggle and occasional frustration that characterize deep learning with human instructors.

Relationships

Individuals may unconsciously benchmark human partners against the AI companion's infinite patience, consistent emotional availability, and conflict-free interaction — creating unrealistic expectations that erode satisfaction with real relationships that necessarily involve disagreement and imperfection.

Tech & product

Product teams may exploit synthetic parasociality by designing AI interfaces with names, personalities, memory features, and emotional language specifically to increase user retention and subscription revenue, even when this deepens unhealthy attachment patterns.

Workplace & hiring

Employees who rely heavily on AI assistants for brainstorming and feedback may begin to experience the AI as a trusted colleague, reducing their engagement with human teammates and missing the creative friction and diverse perspectives that emerge from genuine interpersonal collaboration.

Politics & media

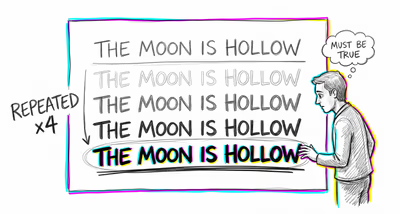

AI-powered news aggregators or political chatbots that adopt a personalized, conversational tone may gain outsized influence over users' political views — not through the strength of their arguments, but through the parasocial trust users place in an entity that feels like a knowledgeable friend.