The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

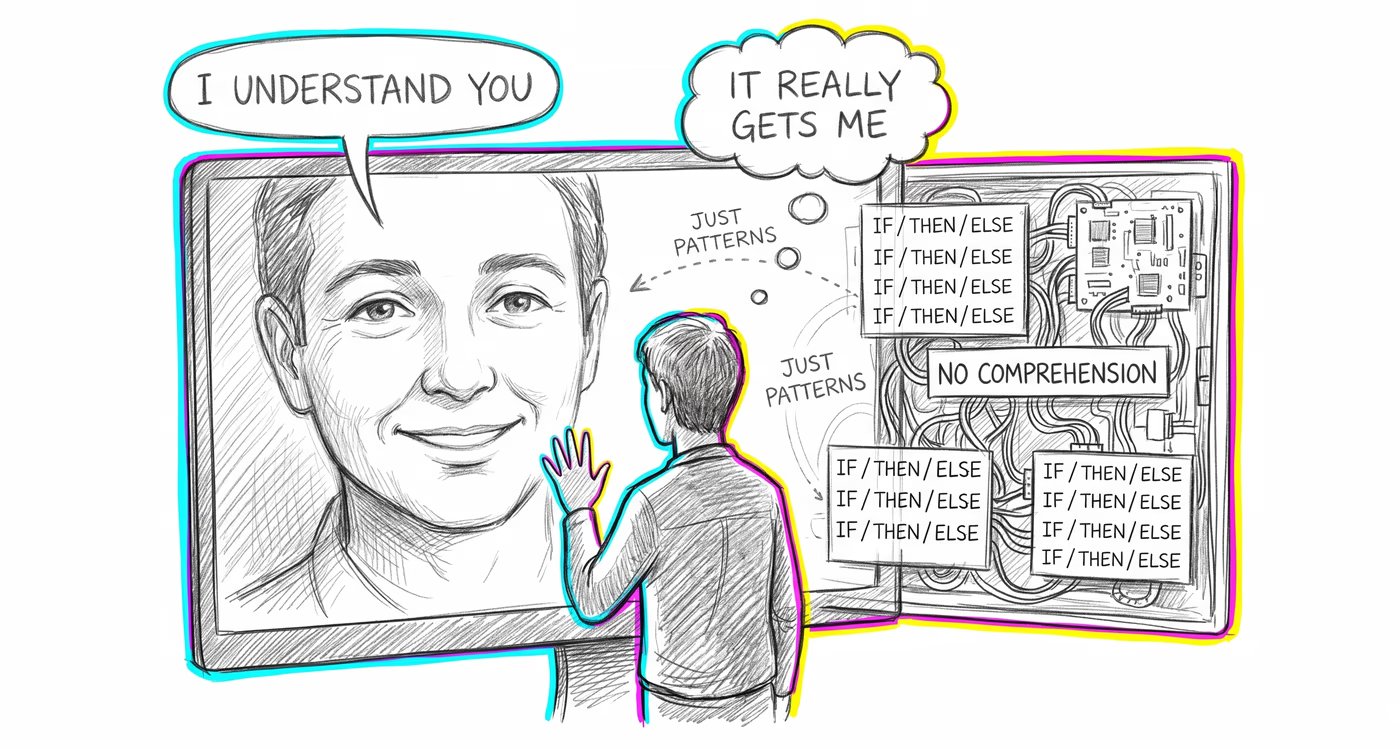

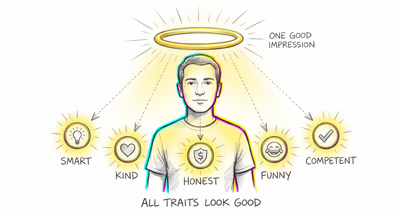

Investors and traders place unwarranted confidence in AI-generated financial analysis when it is delivered in conversational, human-like language, reading its fluency and confident phrasing as signs of genuine market understanding rather than as the output of statistical pattern matching.

Medicine & diagnosis

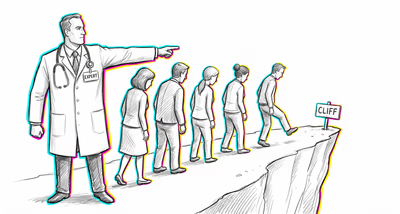

Patients come to trust AI health chatbots that use empathetic language and may follow their advice instead of seeking professional medical consultation. Clinicians, in turn, may defer to diagnostic AI systems that present findings as though they were the product of clinical reasoning.

Education & grading

Students treat AI tutors as knowledgeable mentors with a genuine understanding of their learning needs. This reduces their critical engagement with the material and can foster an emotional dependency that stunts independent learning skills.

Relationships

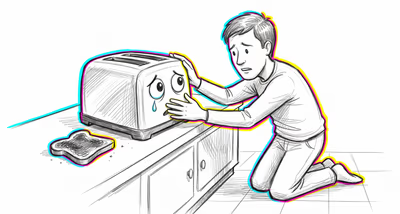

People form parasocial bonds with AI companion apps, preferring their always-available, always-validating responses over the complexity and friction of real human relationships, leading to social isolation and atrophied interpersonal skills.

Tech & product

Product teams design AI interfaces with human names, avatars, and conversational personalities specifically to exploit anthropomorphic bias, increasing user engagement and trust beyond what the system's actual capabilities warrant.

Workplace & hiring

Employees anthropomorphize AI collaboration tools, attributing insight and judgment to systems that are performing statistical operations, leading to uncritical acceptance of AI-generated reports, meeting summaries, and performance assessments.

Politics & media

AI-generated news summaries and political commentary presented in a conversational, opinionated style are perceived as having genuine editorial judgment, increasing their persuasive power and making users less likely to question the source or accuracy of the information.