Finance & investing

Investors and entrepreneurs systematically underestimate how long it will take for a new venture to become profitable or for a product to reach market, leading to cash-flow crises when runway assumptions prove too optimistic.

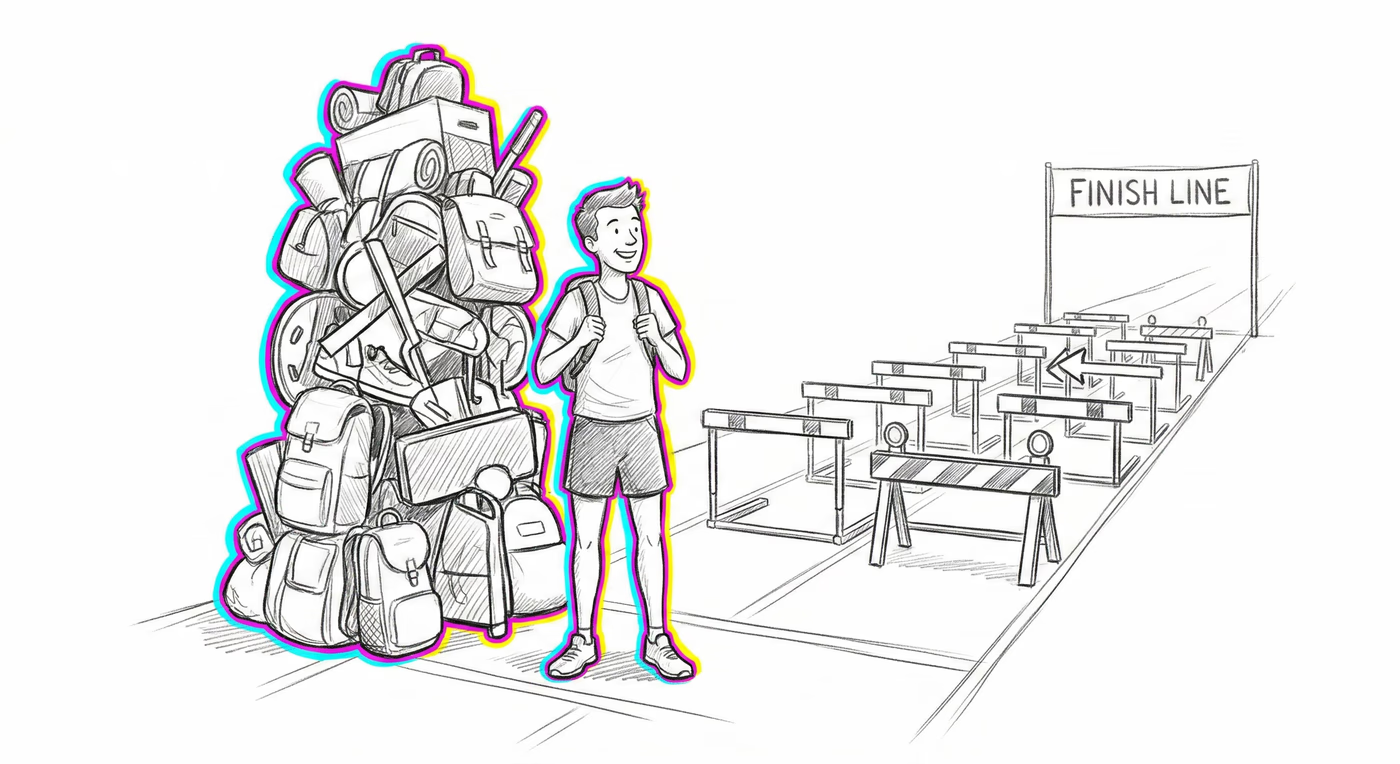

Underestimating the time, cost, and risks of future tasks, even when past experience shows that similar tasks have taken longer.

Imagine every time you pack for a trip, you think 'I'll be done in 20 minutes,' but it always takes an hour because you forget about finding your charger, ironing that shirt, and deciding what shoes to bring. Even though this happens every single time, the next trip you still think, 'This time it'll only be 20 minutes.' That's the Planning Fallacy — your brain keeps imagining the perfect version of the future instead of remembering what actually happened before.

The Planning Fallacy describes a systematic pattern in which individuals and organizations generate overly optimistic predictions about how quickly and cheaply they will complete future tasks, while simultaneously holding accurate knowledge that similar past tasks took much longer. A defining feature is the disconnect between general beliefs about one's track record (which are often realistic) and specific predictions for the current task (which are almost always too optimistic). This bias is driven by a reliance on the 'inside view' — mentally simulating the specific steps of a project under ideal conditions — rather than the 'outside view' of consulting base rates from comparable past projects. The fallacy applies not only to time estimates but also to cost projections, risk assessments, and benefit forecasts, and it scales from trivial personal chores to billion-dollar infrastructure projects.
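The outside view can be made concrete with reference-class forecasting. The sketch below is a minimal illustration under stated assumptions. It assumes you have records of planned and actual durations for comparable past projects; the `PAST_PROJECTS` data, the function names, and the choice of the 80th percentile are all hypothetical. It computes each past project's overrun ratio (actual divided by planned) and scales the new inside-view estimate by a chosen percentile of those ratios.

```python
from statistics import quantiles

# Hypothetical reference class: (planned_weeks, actual_weeks) for comparable
# past projects. Illustrative numbers only, not data from any real study.
PAST_PROJECTS = [
    (4, 6), (8, 13), (3, 3), (10, 18), (6, 9), (5, 8), (12, 15), (2, 4),
]


def overrun_ratios(projects):
    """Return actual / planned duration for each past project."""
    return [actual / planned for planned, actual in projects]


def outside_view_estimate(inside_estimate, projects, percentile=80):
    """Scale an inside-view estimate by a percentile of historical overrun ratios.

    `percentile` must be an integer from 1 to 99.
    """
    ratios = overrun_ratios(projects)
    # With n=100, quantiles() returns 99 cut points; index k is the (k+1)th percentile.
    cut_points = quantiles(ratios, n=100, method="inclusive")
    uplift = cut_points[percentile - 1]
    return inside_estimate * uplift


if __name__ == "__main__":
    inside = 10  # the team's own step-by-step estimate, in weeks
    print(f"Inside view:  {inside} weeks")
    print(f"Outside view: {outside_view_estimate(inside, PAST_PROJECTS):.1f} weeks (P80)")
```

With these made-up records, a 10-week inside-view plan becomes about 17.3 weeks at the 80th percentile. Using a high percentile rather than the average overrun deliberately builds in a buffer; which percentile to use is a judgment call about how much risk of overrun is acceptable.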

The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team — same mechanism, different costume.


Hospital administrators underestimate the time and budget required for implementing new electronic health record systems, leading to extended transition periods during which clinical workflows are disrupted and staff burnout increases.

Students routinely underestimate the time required to complete papers, theses, and study plans, despite consistent past experience of missing self-imposed deadlines, leading to last-minute cramming and lower-quality work.

People underestimate how long it will take to plan events like weddings, moves, or home remodeling projects with a partner, creating stress when shared timelines collapse and each person blames the other for poor planning.

Product teams routinely underestimate development sprints and launch timelines, leading to feature cuts, quality compromises, and a culture of perpetual 'crunch time' that damages team morale and product reliability.

Managers set aggressive quarterly goals based on best-case productivity assumptions, then interpret inevitable delays as employee underperformance rather than flawed forecasting, creating a cycle of blame and burnout.

Government infrastructure projects are approved with optimistic timelines and budgets that secure political support, only to face massive cost overruns and delays once construction reveals unforeseen complexities, eroding public trust.

The term was introduced by Daniel Kahneman and Amos Tversky in 1979, in their paper 'Intuitive Prediction: Biases and Corrective Procedures,' published in TIMS Studies in Management Science.

One common evolutionary explanation is that optimistic action-taking was adaptive in ancestral environments. Individuals who confidently planned hunts, migrations, or shelter-building and acted quickly may have been more likely to acquire resources than those who deliberated endlessly over worst-case scenarios. On this account, overconfidence in one's ability to complete tasks on time encouraged initiative and persistence, traits that conferred survival advantages even when timelines were occasionally wrong.

AI project timelines are particularly susceptible: teams underestimate data cleaning, model iteration, and deployment challenges. Additionally, AI systems trained on historical project data may inherit systematically optimistic estimates if the training data reflects planned rather than actual durations, perpetuating the bias in automated scheduling and resource allocation tools.
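The point about training data can be illustrated with a toy sketch. It assumes hypothetical historical records that store both the planned and the actual duration for each task; the `HISTORY` data and both functions are made up for illustration, and real scheduling tools would use richer models and features. Fitting the same simple model (days proportional to task size, least squares through the origin) once on planned durations and once on actual durations shows how learning from plans carries their optimism into the predictions.

```python
# Hypothetical task history: (size_in_points, planned_days, actual_days).
# Illustrative numbers only, not data from any real project tracker.
HISTORY = [
    (3, 5, 8), (5, 8, 12), (8, 12, 20), (2, 3, 5), (13, 20, 31), (5, 7, 11),
]

COLUMNS = {"planned": 1, "actual": 2}


def fit_days_per_point(history, target):
    """Least-squares slope through the origin for days ~ slope * size.

    `target` picks the label the toy model learns from: "planned" or "actual".
    """
    idx = COLUMNS[target]
    numerator = sum(row[0] * row[idx] for row in history)
    denominator = sum(row[0] ** 2 for row in history)
    return numerator / denominator


def mean_shortfall(history, slope):
    """Average of (actual - predicted) days; positive means the model underestimates."""
    errors = [row[2] - slope * row[0] for row in history]
    return sum(errors) / len(errors)


if __name__ == "__main__":
    for target in ("planned", "actual"):
        slope = fit_days_per_point(HISTORY, target)
        print(f"trained on {target:>7}: {slope:.2f} days/point, "
              f"mean shortfall vs actual = {mean_shortfall(HISTORY, slope):.1f} days")
```

On this invented data, the model trained on planned durations falls short of actual outcomes by roughly five days per task on average, while the one trained on actual durations is close to unbiased. The fix in this toy case is simply to train on what happened rather than on what was scheduled.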
