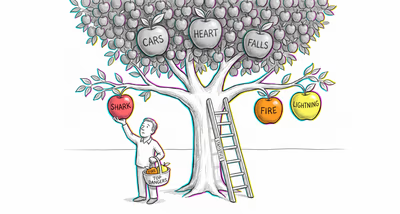

The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

Investors and portfolio managers frequently override quantitative models after a single losing quarter, reverting to discretionary stock-picking that statistically underperforms index-tracking algorithms. The aversion is strongest after market downturns, when the emotional sting of algorithmic losses feels less tolerable than equivalent human-made losses.

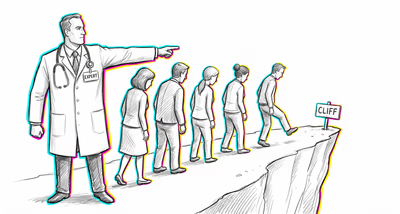

Medicine & diagnosis

Patients and physicians resist AI-assisted diagnostics, especially in radiology, dermatology, and pathology, despite studies showing that these systems can match or exceed specialist accuracy on specific tasks. The resistance intensifies for life-or-death decisions, where people feel that only a human can bear moral responsibility for an error.

Education & grading

Teachers and administrators resist algorithmic tools for student placement, grading, or early-warning dropout detection, believing that human intuition captures nuances that data cannot. This persists even when algorithmic predictions of student success are demonstrably more accurate than teacher judgment.

Relationships

People strongly prefer human matchmakers or their own intuition over dating algorithms, viewing romantic compatibility as inherently subjective and resistant to quantification. Even users of dating apps may distrust the matching algorithm while trusting their own profile-swiping instincts, which are often driven by superficial cues.

Tech & product

Users abandon recommendation engines, spam filters, or autocomplete features after a single salient failure, reverting to manual processes that are slower and less accurate. Product teams struggle with the paradox that making algorithmic errors more visible (for transparency) can accelerate user abandonment.

Workplace & hiring

HR departments resist algorithmic resume screening or performance evaluation tools after any publicized error, preferring interview-based assessments that introduce well-documented biases like the halo effect. Managers distrust algorithmic scheduling and forecasting tools the moment they produce a visibly wrong output.

Politics & media

Voters and the broader public distrust algorithmic content curation and moderation on social media, perceiving automated decisions about which news to show or which content to remove as lacking nuance and fairness, even when human moderators make similar or more frequent errors. This distrust fuels demands for 'human oversight' regardless of comparative accuracy.