The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

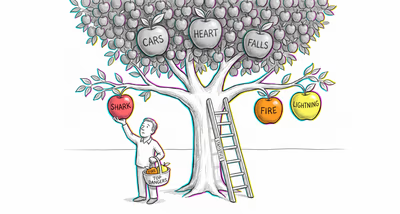

Investors overweight a company's vivid narrative — charismatic CEO, flashy product launch — while ignoring the base rate of startup failure or industry-wide default rates, leading to overvaluation of individual stocks and underestimation of portfolio risk.

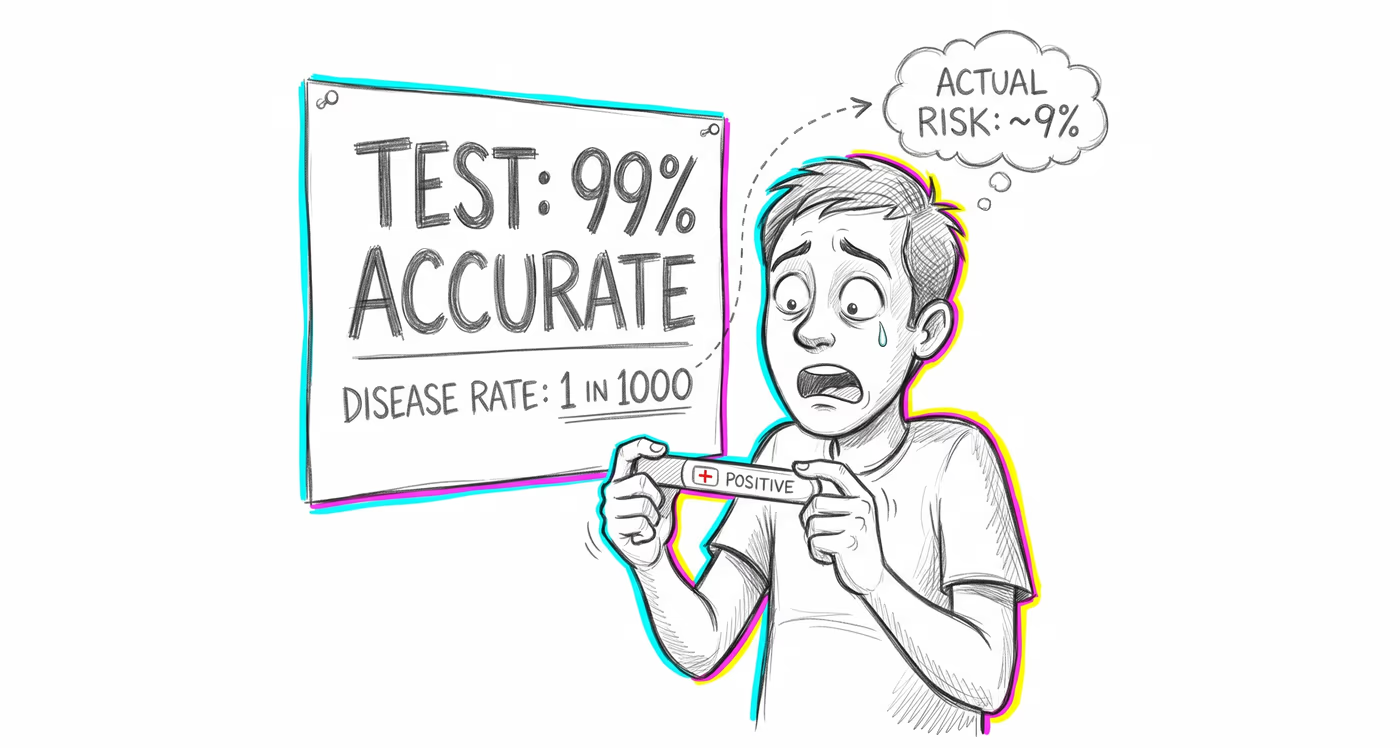

Medicine & diagnosis

Physicians and patients routinely misinterpret positive screening results for rare diseases. A 95% accurate test for a condition affecting 1 in 1,000 people yields far more false positives than true positives: if "95% accurate" means a 5% false-positive rate, screening 1,000 people turns up roughly 50 false alarms for the one real case. Yet both doctors and patients frequently assume a positive result means a near-certain diagnosis.
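The arithmetic behind the screening example can be sketched with Bayes' rule. This is a minimal sketch, not a clinical calculation: it assumes "95% accurate" means both sensitivity and specificity are 0.95 (the text does not say which), and the function name `positive_predictive_value` is purely illustrative.

```python
def positive_predictive_value(prevalence, sensitivity, specificity):
    """Probability of having the condition given a positive test (Bayes' rule)."""
    # Share of the whole population that is sick AND tests positive.
    true_pos = prevalence * sensitivity
    # Share of the whole population that is healthy AND tests positive.
    false_pos = (1 - prevalence) * (1 - specificity)
    return true_pos / (true_pos + false_pos)


# Assumed reading of the example: 1 in 1,000 prevalence, 95% sensitivity and specificity.
ppv = positive_predictive_value(prevalence=1 / 1000, sensitivity=0.95, specificity=0.95)
print(f"P(disease | positive) = {ppv:.1%}")  # prints "P(disease | positive) = 1.9%"
```

Under these assumptions a positive result corresponds to a roughly 2% chance of having the condition, which is why a second, independent test is usually more informative than the first result alone.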

Education & grading

Teachers assess a student's potential based on vivid behavioral cues (articulateness, curiosity) that match a 'gifted' stereotype, without factoring in the low base rate of giftedness in the general student population, leading to biased placement recommendations.

Relationships

People judge a new romantic partner's trustworthiness based on a single compelling story or gesture, ignoring the base rate of how most people behave in similar situations — for example, assuming a partner who once lied about something small is very likely to be a habitual liar.

Tech & product

Spam filters and fraud detection systems with high accuracy rates still produce enormous numbers of false positives when the base rate of actual spam or fraud is very low, frustrating users whose legitimate transactions get flagged. Product teams often focus on improving detection accuracy without addressing the base rate problem.
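To see why accuracy alone does not settle the matter, it can help to hold the detector fixed and vary only the base rate. The sketch below uses hypothetical numbers (one million transactions, a 99% detection rate, a 1% false-positive rate); `flagged_breakdown` is an illustrative helper, not part of any real fraud-detection library.

```python
def flagged_breakdown(n_events, base_rate, sensitivity, false_positive_rate):
    """Expected true alarms, false alarms, and precision among n_events."""
    n_bad = n_events * base_rate          # events that really are fraud or spam
    n_good = n_events - n_bad             # legitimate events
    true_alarms = n_bad * sensitivity
    false_alarms = n_good * false_positive_rate
    precision = true_alarms / (true_alarms + false_alarms)
    return true_alarms, false_alarms, precision


# Hypothetical detector: same quality in every row, only the base rate changes.
for base_rate in (0.0001, 0.001, 0.01, 0.1):
    tp, fp, precision = flagged_breakdown(1_000_000, base_rate, 0.99, 0.01)
    print(
        f"base rate {base_rate:>7.2%}: "
        f"{tp:>8.0f} true alarms, {fp:>8.0f} false alarms, "
        f"precision {precision:.1%}"
    )
```

With the detector unchanged, the share of flags that are genuine rises steeply as the base rate rises. At the lowest base rate in this sketch, the large majority of flagged transactions are legitimate.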

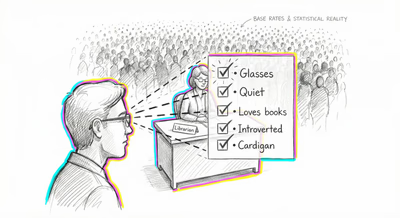

Workplace & hiring

Hiring managers, given a structured personality profile of a candidate, focus on how well it matches their mental prototype of a 'star performer.' They ignore that star performers are statistically rare, which means most candidates who fit the profile will still perform at average levels.

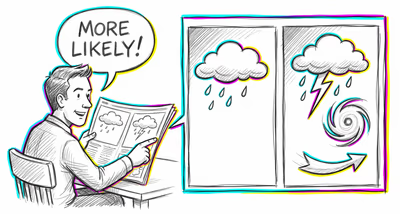

Politics & media

Media coverage of rare but dramatic events (terrorist attacks, mass shootings) causes the public to vastly overestimate the likelihood of these events while ignoring far more common causes of harm. Policy responses then disproportionately fund low-base-rate threats at the expense of higher-base-rate risks.