The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

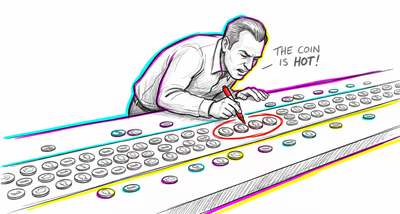

Finance & investing

Investors and analysts routinely construct post-hoc explanations for market movements ('the market dropped because of the jobs report'), creating an illusion of predictable cause-and-effect in what are largely complex, multi-variable, often random fluctuations. This leads to overconfidence in predictive models built on backward-looking narratives.

Medicine & diagnosis

Clinicians may construct tidy diagnostic narratives that prematurely lock onto a single causal explanation for a patient's symptoms, ignoring alternative diagnoses or comorbidities that don't fit the story. Patients similarly construct causal health narratives ('I got sick because I was stressed') that may lead them to pursue the wrong treatments.

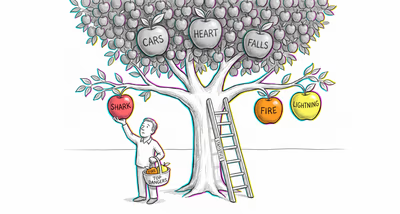

Education & grading

Teachers construct narratives about student performance ('she struggles because she doesn't try') that oversimplify the complex interplay of cognitive, social, and environmental factors affecting learning. Success stories of famous dropouts are retold as inspiring cause-and-effect tales, ignoring the vast majority of dropouts who did not succeed.

Relationships

People construct origin stories for relationships ('we were meant to be') and breakups ('we grew apart') that impose clean narrative arcs on messy, multi-causal emotional dynamics. These stories shape how they approach future relationships, often by reinforcing oversimplified lessons.

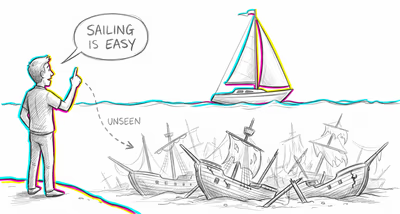

Tech & product

Product teams construct post-launch narratives about why a feature succeeded or failed, attributing outcomes to specific design decisions while ignoring market timing, competitor moves, and random user behavior. Case studies of successful products become origin myths that mislead other teams into copying surface-level strategies.

Workplace & hiring

Hiring managers construct stories about candidates based on resume sequences ('she moved up every two years — clearly a high performer') while ignoring the randomness and context behind career transitions. Performance narratives like 'he turned the department around' compress complex organizational dynamics into heroic individual arcs.

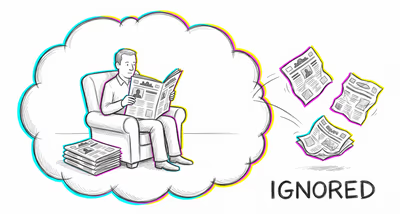

Politics & media

News media packages complex geopolitical events into simple narrative arcs with clear villains, heroes, and turning points. Voters construct cause-and-effect stories about policy outcomes ('the economy improved because of that policy') while ignoring dozens of confounding variables, regression to the mean, and the role of chance.