The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

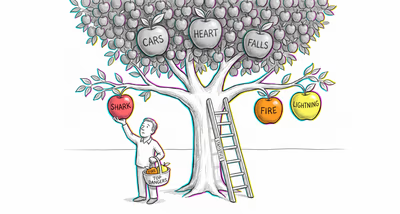

Investors and analysts tend to assign a higher cumulative risk to a portfolio when individual risk factors (interest rate changes, currency fluctuations, sector downturns, regulatory changes) are listed separately than when they are asked about 'overall market risk.' This can lead to over-hedging or excessive diversification, because the itemized risks seem larger in aggregate than the packed whole.

Medicine & diagnosis

Patients asked about the likelihood of 'side effects' from a medication give lower estimates than patients who are presented with a list of specific side effects (nausea, headache, dizziness, fatigue). This can lead to inflated risk perception and medication non-adherence when side effects are itemized in detail on packaging or during informed consent.

Education & grading

Students asked to estimate the probability of failing a course give a lower figure than the total they reach when asked separately about failing due to poor exam scores, missed assignments, low participation, and a bad final project. This can lead to underestimation of overall academic risk when threats are considered holistically, or to panic when they are itemized.

Relationships

When someone considers the general question 'Will this relationship end?' they tend to estimate a low probability. But when they separately consider incompatible life goals, communication problems, financial stress, and family conflicts, the itemized probabilities sum to a much higher figure, potentially amplifying anxiety about the relationship.

Tech & product

Product teams that list specific failure modes (server crash, data corruption, API timeout, authentication error) during risk reviews produce inflated total risk estimates compared with assessing 'system failure' as a single category. The same effect works on the user side: a single 'security' label feels less comprehensive to users than a list of 'encryption, two-factor authentication, fraud detection, and privacy controls,' which influences perceived product value.
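One way to make the gap visible in a risk review is to put the packed estimate next to the total implied by the itemized ones. The sketch below is illustrative only: the function name `unpacking_gap`, the example probabilities, and the assumption that failure modes are independent are all hypothetical and not taken from any study.

```python
def unpacking_gap(packed_estimate, itemized_estimates):
    """Compare one 'packed' estimate with the total implied by itemized ones."""
    # Naive total: what people get when they simply add the itemized figures.
    naive_sum = sum(itemized_estimates)

    # Probability that at least one failure mode occurs,
    # ASSUMING the modes are independent (a simplification).
    p_none = 1.0
    for p in itemized_estimates:
        p_none *= 1.0 - p
    at_least_one = 1.0 - p_none

    return {
        "packed": packed_estimate,
        "naive_sum": naive_sum,
        "at_least_one": at_least_one,
        "gap": at_least_one - packed_estimate,
    }


# Hypothetical review: 'system failure' judged at 10% as a whole, versus
# server crash, data corruption, API timeout, authentication error itemized.
result = unpacking_gap(0.10, [0.05, 0.04, 0.06, 0.03])
for name, value in result.items():
    print(f"{name}: {value:.3f}")
```

A positive `gap` does not say which number is right. It only flags that the team's itemized and packed judgments disagree, which is the cue to revisit both.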

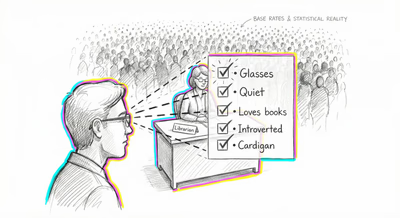

Workplace & hiring

During performance reviews, listing specific shortcomings (missed deadlines, poor communication, low initiative, weak technical skills) makes an employee's overall performance seem worse than a summary statement of 'needs improvement.' Managers who unpack negatives may unknowingly inflate the perceived severity of an employee's issues.

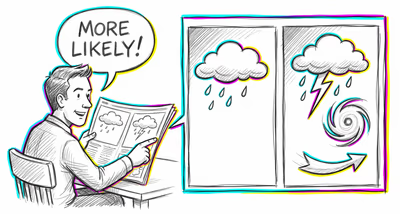

Politics & media

Political campaigns and media outlets exploit this effect by itemizing specific threats (terrorism, cyberattacks, pandemics, economic collapse) rather than referring to 'national security risks' in the abstract. The unpacked list makes the total threat seem more imminent and severe, which can be used to justify policy positions or generate engagement.