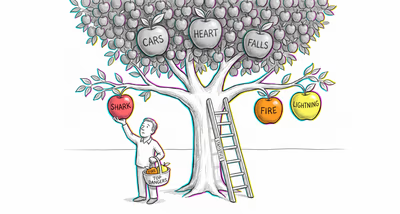

The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

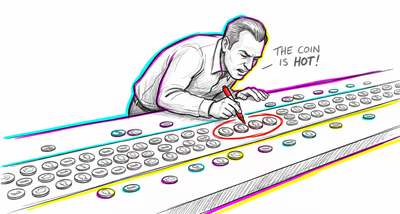

Finance & investing

Credit risk models trained only on approved loan applicants may find a spurious negative correlation between income and credit history quality. Applicants who were weak on both were already filtered out during approval, which distorts the apparent relationship between the two indicators.
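To make the filter concrete, here is a minimal simulation sketch. It assumes a toy world that is not from any real lender: income and credit history are independent standard-normal scores, and a loan is approved whenever their sum clears a cutoff. The names `simulate_approval` and `cutoff` are hypothetical.

```python
import random


def pearson(xs, ys):
    """Plain Pearson correlation coefficient for two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def simulate_approval(n=20000, cutoff=0.0, seed=0):
    """Return (correlation among all applicants, correlation among approved).

    Assumption: the two scores are independent in the full applicant pool,
    and approval requires income + history > cutoff.
    """
    rng = random.Random(seed)
    income = [rng.gauss(0, 1) for _ in range(n)]
    history = [rng.gauss(0, 1) for _ in range(n)]

    # The approval filter: applicants weak on both scores never get through.
    approved = [(i, h) for i, h in zip(income, history) if i + h > cutoff]

    r_all = pearson(income, history)
    r_approved = pearson([a[0] for a in approved], [a[1] for a in approved])
    return r_all, r_approved


if __name__ == "__main__":
    r_all, r_approved = simulate_approval()
    print(f"all applicants: r = {r_all:+.2f}")
    print(f"approved only:  r = {r_approved:+.2f}")
```

Under these assumptions the full pool shows a correlation near zero, while the approved subset shows a clearly negative one, even though nothing links the two scores.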

Medicine & diagnosis

Hospital-based case-control studies can find false protective associations between unrelated diseases because the sample only includes people sick enough to be hospitalized. If a patient lacks one condition, something else must have caused the admission, which creates an artifactual inverse relationship.
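The hospital version can be worked through exactly, without simulation. The sketch below assumes two independent diseases, A and B, each of which can independently trigger admission, plus a small baseline admission rate from other causes. All prevalences and admission probabilities are made-up illustrative numbers, and the function names are hypothetical.

```python
def admission_prob(has_a, has_b, base=0.01, from_a=0.30, from_b=0.30):
    """Probability of being hospitalized given disease status.

    Assumption: each cause (baseline, disease A, disease B) independently
    triggers admission, so we multiply the chances of NOT being admitted.
    """
    p_not_admitted = 1 - base
    if has_a:
        p_not_admitted *= 1 - from_a
    if has_b:
        p_not_admitted *= 1 - from_b
    return 1 - p_not_admitted


def odds_ratio(cells):
    """Odds ratio from a 2x2 table keyed by (has_a, has_b)."""
    return (cells[(1, 1)] * cells[(0, 0)]) / (cells[(1, 0)] * cells[(0, 1)])


def berkson_odds_ratios(prev_a=0.10, prev_b=0.10):
    """Return (odds ratio in the population, odds ratio among the hospitalized)."""
    population = {}
    hospital = {}
    for a in (0, 1):
        for b in (0, 1):
            # Independence: the joint probability is the product of marginals.
            p = (prev_a if a else 1 - prev_a) * (prev_b if b else 1 - prev_b)
            population[(a, b)] = p
            # Weight each cell by how likely that kind of person is admitted.
            hospital[(a, b)] = p * admission_prob(a, b)
    return odds_ratio(population), odds_ratio(hospital)


if __name__ == "__main__":
    or_population, or_hospital = berkson_odds_ratios()
    print(f"population odds ratio: {or_population:.3f}")
    print(f"hospital odds ratio:   {or_hospital:.3f}")
```

With these numbers the population odds ratio is 1 (no association), while the odds ratio among hospitalized patients falls far below 1, which is exactly the false "protective" signal described above.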

Education & grading

Within selective universities, GPA and standardized test scores may appear negatively correlated among enrolled students, even though they are positively correlated in the general applicant population. The admissions filter excluded students who scored low on both, and those who scored at the top on both may have gone elsewhere.
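A two-sided filter can flip the sign of a real positive correlation. The sketch below assumes standardized GPA and test scores with a population correlation of `rho`, and a hypothetical university that ends up enrolling only students whose combined score lands in a band: high enough to be admitted, not so high that they go elsewhere. The band limits and the function name `simulate_admissions` are illustrative assumptions.

```python
import math
import random


def pearson(xs, ys):
    """Plain Pearson correlation coefficient for two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / (var_x * var_y) ** 0.5


def simulate_admissions(n=20000, rho=0.5, low=0.5, high=1.5, seed=1):
    """Return (correlation among all applicants, correlation among enrolled).

    Assumption: enrollment requires low < gpa + test < high.
    """
    rng = random.Random(seed)
    gpa, test = [], []
    for _ in range(n):
        x = rng.gauss(0, 1)
        # Build a second score that correlates with the first at level rho.
        y = rho * x + math.sqrt(1 - rho ** 2) * rng.gauss(0, 1)
        gpa.append(x)
        test.append(y)

    # Two-sided filter: weak-on-both rejected, strong-on-both go elsewhere.
    enrolled = [(g, t) for g, t in zip(gpa, test) if low < g + t < high]

    r_all = pearson(gpa, test)
    r_enrolled = pearson([e[0] for e in enrolled], [e[1] for e in enrolled])
    return r_all, r_enrolled


if __name__ == "__main__":
    r_all, r_enrolled = simulate_admissions()
    print(f"all applicants: r = {r_all:+.2f}")
    print(f"enrolled only:  r = {r_enrolled:+.2f}")
```

Inside the band, a student with a high GPA must have a lower test score to stay under the ceiling, and vice versa, so the enrolled group shows a strongly negative correlation even though the applicant pool is positively correlated.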

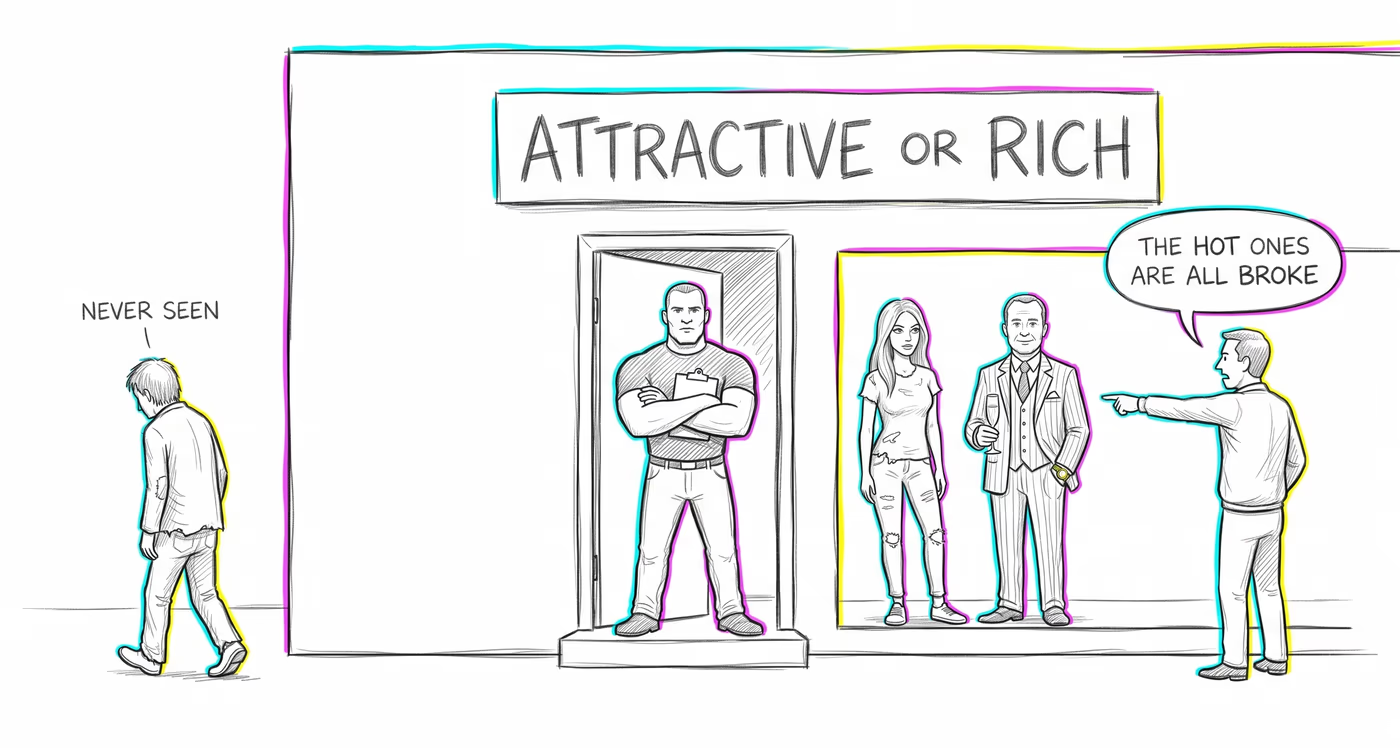

Relationships

People commonly perceive that attractive partners tend to have worse personalities because their dating pool is filtered. They never date people who are both unattractive and unkind, which creates a false trade-off between looks and character within the sample they observe.

Tech & product

Recommendation algorithms trained on user engagement data may learn spurious negative correlations between content attributes (e.g., popularity vs. depth of engagement) because the training data only includes content that passed a visibility threshold, excluding items that scored low on both dimensions.

Workplace & hiring

Among employees at competitive firms, technical skill and interpersonal skill may appear inversely related because hiring filters screen out candidates who lack both, while candidates exceptionally strong in both may have been recruited by more prestigious competitors.

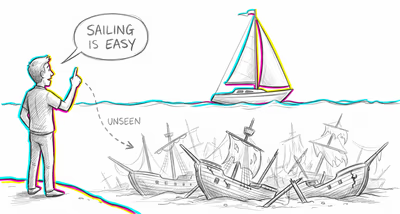

Politics & media

Media consumers may believe that politicians who are charismatic tend to be less substantive, and vice versa, because politicians who are neither charismatic nor substantive never gain enough visibility to enter the observer's awareness.