The same glitch looks different depending on the terrain. Finance, medicine, a relationship, a team: same mechanism, different costume.

Finance & investing

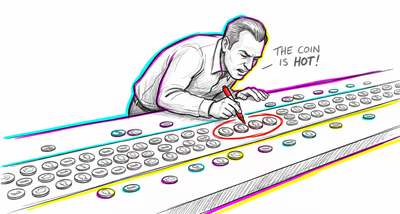

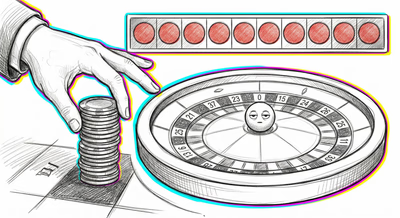

Investors frequently evaluate mutual funds or stock-picking strategies based on a few months or quarters of returns, treating short-term outperformance as evidence of genuine skill rather than recognizing that small time samples produce high variability and that streaks are expected by chance alone.
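To see how many streaks chance alone produces, here is a minimal sketch. It assumes, purely for illustration, that every fund has zero skill and that beating the benchmark in a given quarter is an independent coin flip (`p_beat=0.5`). The function names and the figure of 1,000 funds are hypothetical, not drawn from any real dataset.

```python
import random

def expected_lucky_funds(n_funds, n_quarters, p_beat=0.5):
    """Expected number of zero-skill funds that beat the benchmark every quarter."""
    return n_funds * p_beat ** n_quarters

def simulate_lucky_funds(n_funds, n_quarters, p_beat=0.5, seed=0):
    """Count simulated zero-skill funds with an unbroken winning streak."""
    rng = random.Random(seed)
    lucky = 0
    for _ in range(n_funds):
        # all() stops at the first losing quarter for this fund
        if all(rng.random() < p_beat for _ in range(n_quarters)):
            lucky += 1
    return lucky

print(expected_lucky_funds(1000, 4))  # 62.5
print(simulate_lucky_funds(1000, 4))  # one simulated draw, close to the expectation
```

Under these assumptions, about 6% of skill-free funds post a perfect year of quarterly outperformance, so a four-quarter streak on its own says little about skill.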

Medicine & diagnosis

Clinicians may draw strong conclusions about a treatment's efficacy from a handful of patients they've personally treated, overriding the results of large randomized controlled trials. Similarly, patients may dismiss large-scale safety data in favor of a few anecdotal adverse reactions reported by people they know.

Education & grading

Teachers form confident judgments about a student's ability from one or two early assignments, and school administrators rank programs or curricula based on test scores from very small cohorts where random variation dominates actual quality differences.
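The effect of cohort size on rankings can be sketched with a small simulation. It assumes every program has identical true quality, with individual scores drawn from the same normal distribution; the mean of 70, the standard deviation of 15, and the function name are illustrative assumptions only.

```python
import random
import statistics

def cohort_mean_spread(cohort_size, n_cohorts=200, mean=70.0, sd=15.0, seed=0):
    """Standard deviation of cohort average scores when every cohort
    is drawn from the same score distribution (identical true quality)."""
    rng = random.Random(seed)
    averages = [
        statistics.fmean(rng.gauss(mean, sd) for _ in range(cohort_size))
        for _ in range(n_cohorts)
    ]
    return statistics.stdev(averages)

# Any spread here is pure noise, since the programs are identical by construction.
print(round(cohort_mean_spread(10), 2))
print(round(cohort_mean_spread(1000), 2))
```

In this setup the averages of 10-student cohorts scatter far more widely than those of 1,000-student cohorts, so a ranking of small cohorts mostly sorts them by luck.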

Relationships

People judge a new partner's character from a few early interactions, a small sample of behavior, and form rigid expectations that resist updating even as more information becomes available.

Tech & product

Product teams run A/B tests with insufficient sample sizes and ship features based on early results that appear statistically significant, only to see the effect vanish when rolled out to the full user base. UX researchers draw design conclusions from usability tests with 3-5 participants without acknowledging the limits of such small samples.
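As a rough guide to what "sufficient" means for an A/B test, the sketch below uses the standard normal-approximation formula for comparing two conversion rates with a two-sided test. The 5% significance level, 80% power, and the 10% versus 12% conversion rates are assumed values chosen for illustration, and the function name is hypothetical.

```python
import math
from statistics import NormalDist

def sample_size_per_arm(p_control, p_variant, alpha=0.05, power=0.80):
    """Approximate users needed in each arm to detect p_control vs p_variant
    (two-sided test, normal approximation, unpooled variance)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # quantile for desired power
    variance = p_control * (1 - p_control) + p_variant * (1 - p_variant)
    effect = p_variant - p_control
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)

print(sample_size_per_arm(0.10, 0.12))  # roughly 3,800 users per arm
print(sample_size_per_arm(0.10, 0.11))  # a smaller lift needs far more users
```

The formula assumes the test is evaluated once, at the planned sample size. Checking results repeatedly and stopping at the first significant reading raises the false-positive rate above the nominal level, which is one way early wins end up vanishing at rollout.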

Workplace & hiring

Performance reviews are heavily influenced by a few recent memorable incidents rather than a full year of work. Hiring committees generalize about entire universities, bootcamps, or previous employers from encounters with just a few candidates.

Politics & media

Polls with very small sample sizes are reported with the same authority as large-scale surveys. Voters and commentators treat a handful of town hall reactions or viral social media posts as representative of public opinion at large.
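The gap between a small poll and a large one can be put in numbers with the usual margin-of-error approximation for a proportion. This is a sketch that assumes a simple random sample and a 95% confidence level (`z=1.96`); real polls involve weighting and design effects that it ignores, and the function name is illustrative.

```python
import math

def margin_of_error(n, p=0.5, z=1.96):
    """Approximate 95% margin of error for a proportion from a simple random sample.
    p=0.5 is the worst case, giving the widest margin."""
    return z * math.sqrt(p * (1 - p) / n)

for n in (50, 200, 1000):
    print(n, round(100 * margin_of_error(n), 1))
# 50 13.9
# 200 6.9
# 1000 3.1
```

Under these assumptions, a 50-person poll showing a candidate at 52% is consistent with anything from roughly 38% to 66%, which is a very different claim from the same headline number in a 1,000-person survey.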